Controversial technology

The Metropolitan Police have announced they will shortly begin the operational use of Live Facial Recognition Technology, saying that it will “tackle serious crime and help protect the vulnerable”. Human rights, civil liberties and privacy campaigners, including Liberty and Big Brother Watch, call the move “dangerous, oppressive and completely unjustified” and “an enormous expansion of the surveillance state”.

Whilst controversy rages, facial recognition technology is being legally marketed and used in England and Wales right now. Technology companies, businesses generally, schools and employers in every sector are asking how they can protect themselves when dealing with this new technology.

Here comes the science bit…

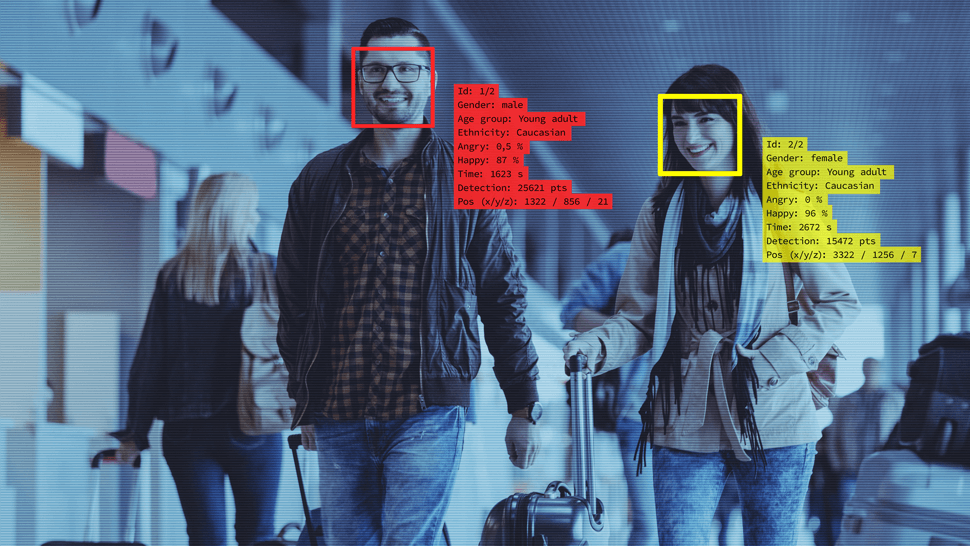

Facial recognition technology (FRT) enables computers to recognise faces in a photograph or video. A unique biometric template of each image is produced and then matched to images already held on a database. This “static” process essentially matches one image to another submitted beforehand, dispensing with the need for physical human checks.
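For readers who want to see the one-to-one matching step in concrete terms, here is a minimal sketch. It assumes a biometric template is simply a list of numbers and that two templates are compared by cosine similarity against a threshold. The function names and the 0.8 threshold are hypothetical illustrations, not the workings of any particular product.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length numeric templates."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("templates must not be all zeros")
    return dot / (norm_a * norm_b)


def is_same_face(probe, enrolled, threshold=0.8):
    """Hypothetical 1:1 check: does the new image match the enrolled one?

    The threshold is an assumed value; real systems tune it to balance
    false matches against false rejections.
    """
    return cosine_similarity(probe, enrolled) >= threshold
```

Where the threshold is set matters: the lower it is, the more often two different people will be treated as the same person.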


FRT is the technology behind the Apple iPhone’s Face ID and the automated passport control booths many people have already encountered. It has also been deployed by a variety of businesses when granting access to premises.

Live Facial Recognition (LFR) is the real-time automated processing of multiple images taken from CCTV, which prompts human intervention when a match is found. This “moving” process essentially checks all the faces in a crowd, looking for people of interest to the user of the technology whose images are already held on a “watch-list”. When a match is found, it must be checked by a human being before any approach is made to the person of interest.
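The watch-list process described above can be sketched as follows. This is an illustration only: it assumes each face is reduced to a list of numbers and compared by cosine similarity, and the names `Candidate` and `screen_face` and the 0.8 threshold are hypothetical. The point to notice is that a hit is returned as a candidate flagged for human review, never as a confirmed identification.

```python
import math
from dataclasses import dataclass


@dataclass
class Candidate:
    watchlist_id: str
    score: float
    needs_human_review: bool = True  # a person must confirm before any approach


def _cosine(a, b):
    """Cosine similarity of two equal-length, non-zero numeric templates."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def screen_face(probe, watchlist, threshold=0.8):
    """Compare one face from the crowd against every watch-list entry.

    Returns the best-scoring entry as a Candidate if it reaches the
    (assumed) threshold, otherwise None.
    """
    best_id, best_score = None, -1.0
    for entry_id, template in watchlist.items():
        score = _cosine(probe, template)
        if score > best_score:
            best_id, best_score = entry_id, score
    if best_id is not None and best_score >= threshold:
        return Candidate(best_id, best_score)
    return None
```

Because every face in the crowd is compared with every entry on the list, even a small error rate per comparison can produce a meaningful number of false alerts, which is why the human check matters.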

The Metropolitan and South Wales Police Services have deployed live FRT on many occasions in the last couple of years – it has also been used by private companies, such as pseudo-public space owners and retailers, searching the general public for people who match their own watch-lists.

If FRT is legal, why should I be concerned?

For two reasons: it may be banned in future, and in the meantime users may be fined or sued if they breach existing laws.

There are so many issues with FRT design that it has been called “the plutonium of artificial intelligence” by a researcher in the Fairness, Accountability, Transparency and Ethics Group at Microsoft Research in Montreal. Such are the concerns about FRT that there have been worldwide calls for statutory codes, legislation, even an outright ban.

The City of San Francisco banned LFR in July 2019. The EU Commission is currently considering a three to five year ban on FRT in public areas throughout Europe, although the ban was reportedly removed from the draft issued on 29 January. Brexit aside, the House of Commons Science and Technology Committee has urged police and local authorities to suspend LFR. The hope is that temporary bans will allow legislation to catch up with developments in technology.

Even the manufacturers themselves accept that the technology is still developing, but argue that it needs to remain in use so that the identified flaws can be improved. They lobby against bans but welcome engagement with law-makers. Amazon’s Jeff Bezos has even claimed his company is drafting its own proposals for legislation.

In the meantime, retail technology companies market relatively inexpensive FRT to private companies and individuals. When private users of FRT get it wrong, it is they, not the vendor, who get sued and fined.

For example:

- An electrician in New Zealand was recently awarded compensation for wrongful dismissal when his employers deployed FRT incorrectly

- Apple itself was reportedly sued for $1bn in 2019 by a student in New York who says he was mistakenly arrested after FRT wrongly identified him in one of its stores

- Even in China, where FRT is mainly in the news in connection with the authorities’ treatment of the Uighurs in Xinjiang, FRT is the subject of an ongoing claim for compensation by a customer denied access to a theme park.

In England and Wales, compensation claims can be brought for mishandling FRT. Separately, the Information Commissioner has the power to issue fines of up to £17m (€20m) or 4% of annual global turnover, whichever is higher.
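The ceiling on a fine is simple arithmetic. The sketch below assumes the higher of the two figures applies, as it does under the GDPR, and uses a hypothetical function name. It shows only the maximum the Information Commissioner could impose, not what any particular fine would be.

```python
def max_fine_gbp(annual_global_turnover_gbp, fixed_cap_gbp=17_000_000):
    """Upper limit of a fine: the greater of the fixed cap and 4% of turnover.

    Amounts are whole pounds; integer arithmetic avoids rounding surprises.
    """
    four_percent = annual_global_turnover_gbp * 4 // 100
    return max(fixed_cap_gbp, four_percent)
```

For a business turning over £1bn a year, 4% is £40m, so the percentage figure sets the ceiling. For one turning over £100m, 4% is only £4m, so the £17m figure applies instead.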

Now I’m interested, what should I look out for?

To quote the Surveillance Camera Commissioner, FRT is “a complex area with significant media and political interest here and across the globe. The use of this technology is not just a data protection issue, it’s not just a surveillance issue, it’s not just a biometrics issue and it’s not just about technical, operational and ethical standards. It is all of those and more”.

Pending specific legislation or statutory codes of practice, businesses and individuals must have regard to a wide range of existing laws before buying, selling or using FRT.

To help clients avoid fines and compensation claims, we offer specialist advice on those laws to, for example:

- Manufacturers and retailers on the terms and conditions of the sale of their FRT package and their own statutory obligations

- Businesses, such as shopping centres or retailers, on what to look out for before purchasing FRT systems

- Users, such as schools and other occupiers of premises, on their obligations whilst using FRT.

Facial recognition technology is big business. It is legal and competitively priced. It has the capacity to change lives for good or ill, depending on where you stand on the privacy/law enforcement debate articulated by the Metropolitan Police and civil liberties campaigners this week. Businesses just need to take care in how it is marketed and used.